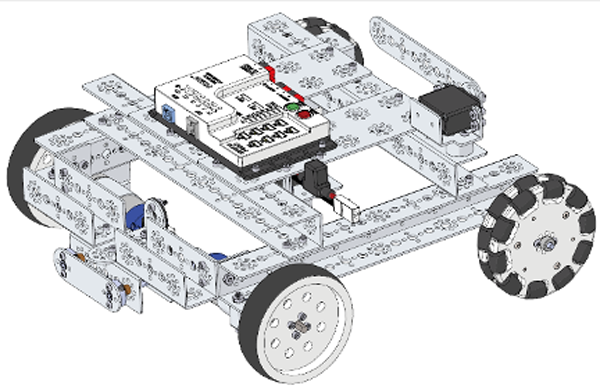

Everything is getting smarter these days – from smart devices to smart cars, from smart appliances to smart homes. Now, with the addition of these new sensors, your TETRIX® robots can become smarter as well. Sensors gather input about a robot’s environment and turn that input into data the robot can use.

Recently, Pitsco has developed TETRIX compatibility and support for four new types of sensors:

- Pixy2 camera

- Gesture/color/light/proximity: Adafruit APDS9960 Gesture Sensor

- Inertial measurement unit (IMU): Grove LSM6DS3 6-Axis Accelerometer & Gyroscope

- Tactile sensors: Grove Micro Switches, Grove Button(P)s, and Grove LED Buttons

What’s more, most of these sensors have multiple capabilities, allowing for many different robotic applications. For each sensor, we’ve created four main types of support material:

- TETRIX coding libraries for use with PRIZM® are installed in the Arduino Software (IDE) and provide the functions and commands necessary to use the sensor with PRIZM.

- TETRIX adapter boards allow for easy connection between the sensor and PRIZM. Adapter boards are available for the Pixy2 camera and the gesture sensor. The IMU and tactile sensors connect directly to PRIZM and do not need an adapter.

- TETRIX Extension Activities (TEAs) are free, downloadable activities that explain how the sensor works and include background knowledge of related STEM concepts, build steps for attaching the sensor to the TaskBot from the PRIZM Programming Guide, sample code and example sketches with detailed explanations of how the code works, and real-world connections showing where these sensors are used.

- TETRIX Robotic Engineering Challenges (RECs) are free, downloadable, open-ended activities centered around using the sensor. Students must follow the provided criteria and constraints to design, build, and program their own robot to meet the challenge objectives.

While Pitsco is currently not selling these sensors directly, all of these support resources are available for free on our website at Pitsco.com/MAX-Sensor-Support.

No matter which sensors students use, they’ll be able to make smarter robots. Building smarter robots leads to learning about trending topics such as robot vision, machine learning, biometrics, artificial intelligence, control theory, and collaborative robotics, just to name a few. So, smarter robots lead to smarter students. And smarter students make the decision to purchase these sensors a smart one! Let’s take a deeper dive into each sensor and its capabilities.

Pixy2 Camera

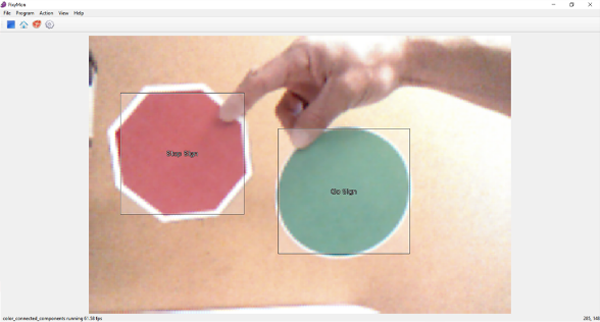

The Pixy2 camera gives vision to your robots. It has three different modes, allowing for a wide range of applications. With color connected components mode, the Pixy2 can be taught to recognize objects based on color, patterns, or bar codes. Pixy2 can return all sorts of data about the objects it sees, including the number of objects; how long it has been tracking each object; and the RGB color combination, relative size, and x-y position of each object in the frame. The Pixy2 also has an advanced line-tracking mode that recognizes multiple paths and intersections for the robot to follow. Pixy2 can be trained to recognize symbols or signs directing the robot to follow a particular path as it approaches an intersection. These paths can be made from contrasting color tape or drawn with markers or pencil. In video mode, Pixy2 can act as a color sensor, providing the RGB color combination of whatever object is in the center of its frame.

In the TEA for Pixy2, students attach the camera to the TaskBot and complete a couple of different activities. They use line-tracking mode to program the TaskBot to follow a line through sharp turns and even imperfections in the line. Then, using color connected components mode, students train the Pixy2 to recognize different signs. The TaskBot’s movements are then controlled by the signs the camera sees. In the Pixy2 REC, students program a robot to track and follow a ball at a set distance. Wherever the ball goes, the robot follows.
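Proportional control is a natural fit for following a ball at a set distance. The robot turns in proportion to how far the ball sits from the center of the frame. It drives in proportion to how far the ball's apparent size is from a target size. The sketch below is plain C++ that shows only this logic and is not the TETRIX Pixy2 library's API. It makes several assumptions: the frame is 316 pixels wide, the ball's width in pixels stands in for distance, and motor powers range from -100 to 100. All names and gain values are hypothetical.

```cpp
#include <algorithm>

// Hypothetical tuning values; real numbers depend on the ball, lens, and robot.
const int kFrameCenterX = 158;   // assumes a 316-pixel-wide Pixy2 frame
const int kTargetWidth = 60;     // ball width (pixels) at the desired distance
const float kTurnGain = 0.5f;    // motor power per pixel of horizontal error
const float kDriveGain = 1.5f;   // motor power per pixel of width error

struct MotorPowers {
  int left;
  int right;
};

// Limit a power value to the assumed -100..100 motor range.
int clampPower(float power) {
  return static_cast<int>(std::max(-100.0f, std::min(100.0f, power)));
}

// Proportional follow controller: steer toward the ball and drive until it
// appears at the target width.
MotorPowers followBall(int ballX, int ballWidth) {
  float turnError = static_cast<float>(ballX - kFrameCenterX);      // > 0: ball right of center
  float driveError = static_cast<float>(kTargetWidth - ballWidth);  // > 0: ball too far away
  float drive = kDriveGain * driveError;
  float turn = kTurnGain * turnError;
  // Speeding up the left side and slowing the right turns the robot right.
  return {clampPower(drive + turn), clampPower(drive - turn)};
}
```

In a real sketch, `ballX` and `ballWidth` would come from the Pixy2's object data on each loop, and the two results would go to the drive motors. Larger gains react faster but can make the robot oscillate around the target.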

While Pitsco doesn’t sell the Pixy2 camera, we do sell an adapter that allows you to easily connect the Pixy2 to your PRIZM controller. To purchase a Pixy2 camera, we recommend visiting CharmedLabs.com to find a distributor. For more information about Pixy2, visit the documentation wiki.

Check out this video to see an example of TETRIX TaskBots playing follow-the-leader using the Pixy2 camera.

Gesture Sensor

The Adafruit APDS9960 Gesture Sensor is like four sensors in one. As its name implies, it can detect basic hand gestures (up, down, left, right, near, far). It also has a built-in color and light sensor that can detect the intensity of the ambient light in the room or the RGB color combination of an object in front of it. Using an infrared transmitter/receiver, it can also measure the proximity of an object within about 40 centimeters.

The gesture sensor TEA walks students through example sketches that use the sensor for proximity sensing, color sensing, and gesture sensing. In the final activity, students attach the sensor to the TaskBot and use gestures to control the movement of the robot. In the gesture sensor REC, students are tasked with designing, building, and programming a gesture-controlled robotic surgical arm.

Pitsco does sell an adapter board to easily connect the Adafruit APDS9960 Gesture Sensor to PRIZM. The gesture sensor itself can be purchased from Adafruit.com.

Inertial Measurement Unit (IMU)

Like the gesture sensor, the IMU combines multiple sensors. The Grove LSM6DS3 6-Axis Accelerometer & Gyroscope has an accelerometer that measures acceleration (g-force) along three axes (x, y, z) and a gyroscope that measures rotational motion around these same axes (pitch, roll, and yaw). Data from the accelerometer and gyroscope can be combined to filter out vibrations and drift to get accurate measurements of a robot’s movement. The IMU also has a built-in temperature sensor that can output temperatures in Fahrenheit or Celsius.
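A complementary filter is one common way to combine the two sensors. The gyroscope is smooth over short intervals, but it drifts as its rate is integrated. The accelerometer's gravity-based tilt angle does not drift, but it is noisy and picks up vibration. Blending the two keeps the strengths of each. Below is a minimal plain C++ sketch that assumes accelerometer readings in g, gyro rate in degrees per second, and a hypothetical blend factor of 0.98. It is not necessarily the method used in the TETRIX IMU library.

```cpp
#include <cmath>

const float kAlpha = 0.98f;           // hypothetical blend factor (weight on the gyro)
const float kRadToDeg = 57.2957795f;  // degrees per radian

// Tilt about the x axis implied by gravity, from accelerometer readings in g.
float accelTiltDeg(float ay, float az) {
  return std::atan2(ay, az) * kRadToDeg;
}

// One filter step: integrate the gyro rate, then pull the estimate slightly
// toward the accelerometer angle so gyro drift cannot build up.
float complementaryFilter(float prevAngleDeg, float gyroRateDps,
                          float accelAngleDeg, float dtSec) {
  float gyroAngle = prevAngleDeg + gyroRateDps * dtSec;
  return kAlpha * gyroAngle + (1.0f - kAlpha) * accelAngleDeg;
}
```

Calling `complementaryFilter` once per loop with the measured time step `dt` keeps a running tilt estimate. Without the filter, a constant gyro bias would accumulate indefinitely. With it, the bias settles to a small, bounded offset.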

In the IMU TEA, students start with an example sketch that outputs data from the sensor in various forms such as raw data, rate of change, g-force, angle of movement, pitch-roll-yaw, and temperature. Then, students attach the IMU to the TaskBot and upload an example sketch where the accelerometer is used to detect the change in acceleration after a crash and the gyroscope is used to make a perfect 90-degree turn to head in a new direction. In the IMU REC, students are challenged to use the IMU to create a smartphone camera stabilizer that keeps the camera pointed in the same direction as the holder moves and rotates.
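The 90-degree turn works because integrating the gyroscope's yaw rate over time gives the angle turned. Crash detection can look for a sudden jump in acceleration between consecutive readings. The plain C++ sketch below illustrates both ideas and is not the TETRIX IMU library's API. `HeadingTracker`, `isCrash`, and the 1.5 g threshold are hypothetical names and values chosen for illustration.

```cpp
#include <cmath>

// Tracks heading by integrating the gyroscope's yaw rate (degrees per second).
struct HeadingTracker {
  double heading = 0.0;  // degrees turned since the tracker was created

  // Add the angle turned during one time step of dt seconds.
  void update(double yawRateDps, double dt) { heading += yawRateDps * dt; }

  // True once the robot has turned at least targetDeg in either direction.
  bool reached(double targetDeg) const { return std::fabs(heading) >= targetDeg; }
};

// Flags a crash when acceleration changes by more than a threshold between
// two consecutive samples. The 1.5 g default is a hypothetical starting value.
bool isCrash(double prevAccelG, double accelG, double thresholdG = 1.5) {
  return std::fabs(accelG - prevAccelG) > thresholdG;
}
```

On the robot, the loop would read the yaw rate from the IMU, call `update` with the elapsed time, and stop the motors once `reached(90.0)` returns true. Small gyro errors also accumulate, so this approach works best for short maneuvers such as a single turn.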

The Grove 6-Axis Accelerometer & Gyroscope can be purchased from SeeedStudio.com.

Tactile Sensors

There are several different types of tactile sensors. However, the TETRIX Tactile Library, TEA, and REC are developed around digital tactile sensors. Examples include push buttons and lever switches that turn on when pressed and off when released. Tactile sensors can be used as input devices, limit switches, or contact detectors.

The tactile sensor TEA starts with a simple example sketch exploring how the sensor works, in which students monitor the sensor’s digital output as it is pressed and released. Next, students complete a series of build steps to create a spring-loaded bumper for the TaskBot. Then, students explore an example sketch for crash detection. In a crash, the bumper presses the tactile sensor, alerting PRIZM that a crash has happened. The TaskBot then backs up and changes direction. The REC for the tactile sensor challenges students to build a tactile gripper that squeezes an object until sensors in the gripper’s fingers are pressed, giving the robot a sense of feeling.
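Mechanical switches often "bounce," flickering between on and off for a few milliseconds when pressed. A fast loop could read one bump as several crashes. A common fix is to debounce the reading and act only on a new press. The following plain C++ sketch shows that logic. The struct name, the three-sample window, and the assumption that `true` means pressed are all illustrative, and the TETRIX Tactile Library may already handle this for you.

```cpp
// Debounced press detector for a digital tactile sensor (hypothetical helper).
// A reading must stay the same for kStableSamples consecutive samples before
// it is accepted, so switch bounce is not counted as several presses.
struct BumperDebouncer {
  static constexpr int kStableSamples = 3;  // hypothetical; depends on loop speed
  bool stableState = false;  // last accepted state (true = pressed)
  bool lastReading = false;  // most recent raw reading
  int sameCount = 0;         // how many samples in a row matched lastReading

  // Feed one raw reading; returns true only when a new, debounced press begins.
  bool update(bool pressed) {
    if (pressed == lastReading) {
      if (sameCount < kStableSamples) ++sameCount;
    } else {
      lastReading = pressed;
      sameCount = 1;
    }
    if (sameCount >= kStableSamples && stableState != lastReading) {
      stableState = lastReading;
      return stableState;  // true on a new press, false on a release
    }
    return false;
  }
};
```

When `update` returns true, the TaskBot would back up and change direction, as in the TEA's crash-detection example.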

Tactile sensors that are compatible with PRIZM can be purchased at SeeedStudio.com. Examples include Grove Micro Switches, Grove Button(P)s, and Grove LED Buttons.

We hope this overview is helpful. We’d love to hear from you as you implement these sensors on your bots.
